AWS: Lambda (Serverless)

Long time no see, I guess, but now I am back to posting more often over here. Today’s topic focuses on an interesting technology: serverless.

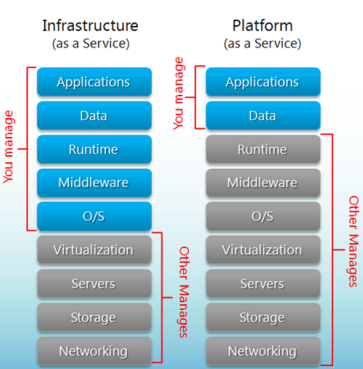

Serverless and containers (or, in their most popular form, Docker) have been buzzwords for the last couple of years. Containers have finally taken off, though many people are still migrating or evaluating, but serverless has not enjoyed quite the same success. A lot of people talk about it, yet few have had the courage to actually use a serverless architecture at large scale in production systems.

Now, the two big cloud providers both offer serverless: Azure in the form of Azure Functions and AWS in the form of AWS Lambda. I haven’t actually had the chance to try Azure Functions, but I have heard that they are somewhat better than AWS Lambda, so I will definitely test that out. On AWS I tried it about 2 years ago and then again just a few weeks ago, and I have some thoughts to share from the perspective of a developer.

These days you can create AWS Lambdas from scratch, from predefined blueprints, or from a repository of functions submitted by AWS, individual users or companies. That wasn’t the case two years ago: you could only start from scratch or from blueprints provided by AWS. Those were definitely helpful, but the addition of a repository of lambdas is a nice one. Pretty much anyone can submit their lambda for others to use, provided they follow the publishing guide linked here.

Assuming you are going to start your lambda from scratch, you choose a name for your function and a runtime: C# (.NET Core), Java, Go, Node.js or Python. That wasn’t the case two years ago, when you could at most choose between Java, Python and Node.js, so we can definitely see improvements there.

After you’ve chosen the runtime, you can create a role from scratch or from a template. A role is essentially a list of access policies that define what this lambda is allowed to do. No worries, you can change all of these later as well, which is great!

When you are done with all that, you’ll be faced with a screen which allows further configuration of your lambda function, although the lambda is already created. The way a lambda works is that it is triggered by some sort of event or API call and then does whatever is defined in its code. On the left-hand side you’ll find all the types of events the lambda can react to (e.g. API calls from API Gateway, or S3 events such as file creations and deletions). As soon as you click on one of them you are prompted to configure that event; maybe it makes sense to cover some of these events in future posts. Under the lambda, on the right side, you’ll see the access policies you’ve previously configured. These can be changed, but you must go to the IAM service to do it, which is not very intuitive, especially since there is no link on this page to guide you.
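To make the trigger idea a bit more concrete, here is a minimal sketch in Python of a handler that works out what invoked it. It assumes the usual shapes of an S3 notification (a `Records` list with an `s3` entry) and of an API Gateway proxy request (an `httpMethod` field); the `describe_event` helper and the format of its return value are made up for illustration.

```python
import json


def describe_event(event):
    """Return a short description of what triggered the function."""
    # S3 notifications arrive as a list under "Records", each with an "s3" key.
    records = event.get("Records") or []
    if records and "s3" in records[0]:
        s3 = records[0]["s3"]
        return "s3:{}:{}/{}".format(
            records[0].get("eventName", "unknown"),
            s3["bucket"]["name"],
            s3["object"]["key"],
        )
    # API Gateway proxy requests carry the HTTP method and path.
    if "httpMethod" in event:
        return "api:{} {}".format(event["httpMethod"], event.get("path", "/"))
    return "unknown"


def lambda_handler(event, context):
    source = describe_event(event)
    # API Gateway proxy integrations expect an HTTP-like response;
    # asynchronous triggers such as S3 discard the return value.
    return {"statusCode": 200, "body": json.dumps({"trigger": source})}
```

A real function would normally be wired to a single trigger type, so the branching here is only meant to show how different the incoming events look.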

Next is a nice little editor which you can customize to your liking, and where you can also set the entry point for your AWS Lambda. The sad part is that the editor will stop loading once your package gets too big (>3 MB), a limit which is easily exceeded since your Lambda package must contain all of its dependencies or libraries. The package itself should never exceed 50 MB, which I think is reasonable. Then you have the chance to set environment variables, which can be quite useful if you want to distinguish, for instance, production lambdas from test lambdas.
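As a small sketch of how the entry point and environment variables fit together in the Python runtime: the entry point is given as `file.function`, so a file named `lambda_function.py` containing the function below would use `lambda_function.lambda_handler`. The `STAGE` variable and the table names are hypothetical, chosen only to show a production/test switch.

```python
import os


def current_stage():
    # STAGE is a hypothetical variable name; defaulting to "test" means a
    # missing setting never behaves like production.
    return os.environ.get("STAGE", "test")


def lambda_handler(event, context):
    stage = current_stage()
    # Hypothetical table names, only to show behaviour switching per stage.
    table = "orders" if stage == "production" else "orders-test"
    return {"stage": stage, "table": table}
```

The same code can then be deployed twice, with only the environment variable differing between the production and the test lambda.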

The important part!

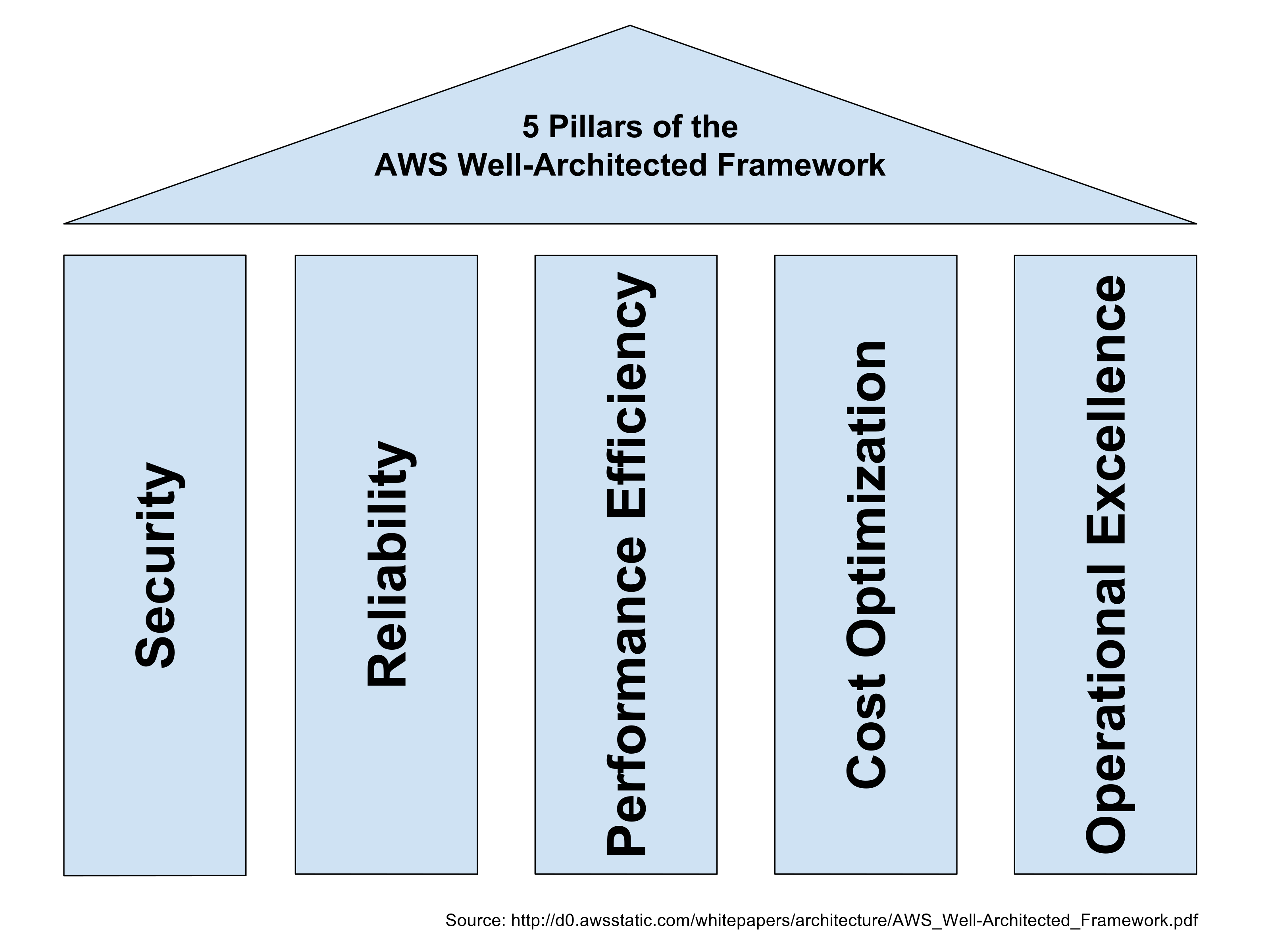

So now you get to configure the physical resources allocated to your lambda. Note that so far you haven’t chosen any OS, CPU, RAM, network config, drives or anything. You can choose your RAM, maximum execution time (timeout), VPC and concurrency, and set up alerts in case the maximum number of retries is exceeded (I will talk about that).

- RAM: you can select up to 3 GB of RAM. The CPU is scaled proportionally. This part is not very transparent, but one thing to note is that at around 1.5 GB of memory AWS will add another core, so if your code is single-threaded it won’t be able to take advantage of it. This is however a huge improvement from 2 years ago. Back then you couldn’t go above 256 MB or 512 MB, I can’t remember exactly.

- VPC: The Virtual Private Cloud (VPC) acts like a logical division of the network in AWS, which you can VPN into and use to extend a corporate network, for instance in case your Lambda needs to call internal corporate resources. This wasn’t available at all 2 years ago. I have heard, though, that this has significant performance drawbacks, adding more than 10 seconds to your execution time because ENIs (Elastic Network Interfaces) have to be attached to your Lambda.

- Maximum execution time: The maximum execution time is now set to 5 minutes, up from 2 minutes 2 years ago. Big improvement but still not enough for some long running tasks. Azure supports 10 minutes maximum.

- Concurrency: Here you can limit the number of instances of your Lambda that can run in parallel. Once you exceed this limit, executions are throttled, so the extra invocations will fail, and an alert will indicate this on the Monitoring tab of your Lambda. This can be useful in a number of ways depending on your needs (e.g. limiting the damage from unauthorized triggering of the lambda or from DoS attacks).

- Alerting: you can either publish a message to SQS or send an email via SNS when the maximum number of retries is exceeded.

- Temporary disk space: limited to a maximum of 512 MB.

- Request body size: for API calls it cannot exceed 6 MB, while for events it cannot be larger than 128 KB.
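The request size limits are easy to hit by accident, so it can help to check a payload before sending it to a Lambda. Here is a rough sketch using the two numbers from the list above; the helper names are made up, and treating MB/KB as binary units and measuring the JSON-serialised size are both assumptions.

```python
import json

# Limits quoted in the list above; binary units are an assumption.
MAX_REQUEST_BYTES = 6 * 1024 * 1024
MAX_EVENT_BYTES = 128 * 1024


def payload_size(payload):
    """Size in bytes of the payload once serialised as UTF-8 JSON."""
    return len(json.dumps(payload).encode("utf-8"))


def fits(payload, is_event=False):
    """True if the payload stays within the API-call or event limit."""
    limit = MAX_EVENT_BYTES if is_event else MAX_REQUEST_BYTES
    return payload_size(payload) <= limit
```

Anything that does not fit is usually better uploaded to S3 first, with only a reference passed in the payload.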

So, all in all, there have been lots of improvements over the last 2 years, but there are still some problems left unsolved:

- The upper limit on the resources you can choose is kind of low. The lack of transparency about CPU power and also network bandwidth is a bummer, as some people would like more control, myself included.

- The execution time is limited to 5 minutes, but you can work around this if you really need to (e.g. if you are processing large sets of data), as you do have access to the remaining time allocated for the Lambda execution. I will share an example Lambda shortly.

- If a Lambda execution fails, it will be automatically retried up to 2 times with some delay in between, and there is no way to configure this. It’s definitely not ideal: for instance, if a lambda times out it will be retried and you might get duplicate data, depending on what your Lambda does. You can make the lambda always succeed in the code. I will include this in the example.

- Cold starts suck. Those are basically the first executions of the Lambda, which are slower; subsequent executions reuse the initial Lambda to avoid this. However, if you have bursts of traffic around certain times (e.g. a food ordering service), you’ll have more concurrent Lambdas and therefore more cold starts.

- CloudWatch logs are not the nicest to look at.

- You kinda need to design with AWS Lambda in mind. So if you think that you could just migrate your code from your ordinary EC2/Docker setup, you might need to re-evaluate, as Lambdas have only one entry point and, in the case of APIs, can only host one API. This will cause lock-in and could cause migration problems if another technology emerges.

- There are some concerns I have around deployments, automated testing and versioning, as this can get quite complicated.
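As a rough sketch of the two workarounds mentioned in the list (watching the remaining time, and making the lambda always succeed), something along these lines could work in Python. `context.get_remaining_time_in_millis()` is what the Python runtime provides; the `items`/`offset` event fields, the safety margin and `process_item` are placeholders made up for illustration.

```python
SAFETY_MARGIN_MS = 10_000  # hypothetical buffer kept before the timeout


def process_item(item):
    # Placeholder for the real work; here it just doubles a number.
    return item * 2


def lambda_handler(event, context):
    items = event.get("items", [])
    start = event.get("offset", 0)
    done = []
    try:
        for index in range(start, len(items)):
            # Stop before the timeout hits and report where to resume.
            if context.get_remaining_time_in_millis() < SAFETY_MARGIN_MS:
                return {"status": "partial", "next_offset": index, "results": done}
            done.append(process_item(items[index]))
    except Exception as error:
        # Returning instead of raising marks the invocation as successful,
        # so the automatic retry is not triggered.
        return {"status": "failed", "error": str(error), "results": done}
    return {"status": "complete", "next_offset": len(items), "results": done}
```

Whoever invokes the function can then call it again with `offset` set to `next_offset` until the status is `complete`. Swallowing errors like this avoids duplicate work from retries, but it also means failures have to be spotted in the returned status or the logs rather than in the Lambda error metrics.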

For these reasons, I think Lambda has not been widely accepted yet, but I think it’s promising: it has made a lot of progress in the last couple of years and I hope it will continue on this path.

Pricing

Lambda pricing is a function of the resources allocated and the execution time, and I found it quite cheap. However, if you are accessing other services like S3 you’ll be billed for those too, so it can spiral out of control easily, as is often the case with AWS.
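To get a feel for how that function behaves, here is a back-of-the-envelope sketch. It assumes the usual Lambda billing model of memory multiplied by duration (GB-seconds) plus a per-request charge; the rates are deliberately left as parameters rather than hard-coded, since they change and should be taken from the current AWS pricing page. The free tier, billing granularity and charges from other services such as S3 are ignored.

```python
def estimate_cost(invocations, avg_duration_ms, memory_mb,
                  price_per_gb_second, price_per_million_requests):
    """Rough estimate: compute charge plus request charge."""
    # Compute is billed on allocated memory multiplied by execution time.
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    compute = gb_seconds * price_per_gb_second
    # Requests are billed per invocation, independent of duration.
    requests = (invocations / 1_000_000) * price_per_million_requests
    return compute + requests
```

For example, `estimate_cost(1_000_000, 200, 512, price_per_gb_second=..., price_per_million_requests=...)` covers a million 200 ms invocations at 512 MB once the two rates are filled in. Doubling the memory doubles the compute part of the bill unless the execution time drops accordingly.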